HubSpot vs. Salesforce: which is right for your business?

A practical guide for SME decision-makers

Part 3 of 5: Closing the Data Gap

A company's finance director types a question into a system: "What was our gross margin last quarter?" A couple of seconds later, the answer appears:

42.3%, sourced from the financial database, last updated this morning at 6:00 AM.

The purpose of a data assistant is to ensure that a C-suite executive can ask a simple question and get a simple answer. The infrastructure that makes this exchange possible, however, is anything but simple.

Behind that two-second response sits a carefully constructed system designed to translate plain English into precise database queries, retrieve information from multiple sources, verify that the person asking has permission to see it, and return an answer that can be traced back to its origin. The system must be fast, accurate, and secure. It must connect to existing data infrastructure without disrupting how that infrastructure currently operates. And it must ensure that the answer given is not invented or estimated, but drawn directly from verified company records.

This article explains how systems like the Xcelerate Business Analyst (an agentic AI assistant) achieve this, focusing on the technical foundation that makes instant, trustworthy answers possible.

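When someone asks "What was our gross margin last quarter?" in ordinary conversation, the question carries implied context: which period counts as "last quarter", which parts of the business are in scope, and how gross margin is calculated. A colleague fills in these details without being told.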

A system answering this query must make the same inferences, but it must do so using pre-agreed definitions rather than guessing. This is where the infrastructure diverges sharply from general AI tools like ChatGPT or similar large language models.

General AI models generate responses based on patterns in their training data. When asked a factual question about a specific company's performance, they have no access to that company's actual data. They might produce a plausible-sounding answer, but it would be fabricated rather than factual. It is not enough to know that a model is usually correct; what matters is whether the specific number it has just produced is faithful to the official data (Owox, 2024).

The Business Analyst avoids this problem through a technical layer called the Model Context Protocol, or MCP. This protocol functions as a controlled interface between the AI component that understands natural language and the data systems that contain actual business information. Rather than allowing the AI to generate answers, MCP forces it to retrieve answers from verified sources.

Think of MCP as a translation service with strict rules about where information can come from. When a user asks a question, the system performs several operations in sequence.
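Going by the responsibilities described earlier (translate the question, check permission, retrieve from a governed source, return a traceable answer), one possible sequence can be sketched as follows. This is a minimal illustration, not the Business Analyst's actual implementation: the `METRICS` registry, the `answer_question` function, the role names, and the sample figures are all hypothetical, and a simple keyword match stands in for the language model.

```python
import sqlite3
from dataclasses import dataclass

# Hypothetical registry: each business term maps to one governed query.
METRICS = {
    "gross margin": {
        "sql": (
            "SELECT ROUND(100.0 * (SUM(revenue) - SUM(cogs)) / SUM(revenue), 1) "
            "FROM finance WHERE quarter = ?"
        ),
        "source": "finance",
        "roles": {"finance_director", "cfo"},
    }
}


@dataclass
class Answer:
    value: float
    source: str  # where the figure came from
    sql: str     # the exact query that produced it


def answer_question(conn, question, role, quarter):
    # Step 1: map the question to a governed metric.
    # (Assumption: a real system would use a language model for this step.)
    metric = next((m for name, m in METRICS.items() if name in question.lower()), None)
    if metric is None:
        raise LookupError("No governed metric matches this question")
    # Step 2: verify that the person asking may see this metric.
    if role not in metric["roles"]:
        raise PermissionError(f"Role {role!r} may not view this metric")
    # Step 3: retrieve the figure from the data source instead of generating it.
    (value,) = conn.execute(metric["sql"], (quarter,)).fetchone()
    # Step 4: return the answer together with its origin.
    return Answer(value=value, source=metric["source"], sql=metric["sql"])


# Made-up sample data for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE finance (quarter TEXT, revenue REAL, cogs REAL)")
conn.executemany(
    "INSERT INTO finance VALUES (?, ?, ?)",
    [("2025-Q4", 600.0, 350.0), ("2025-Q4", 400.0, 227.0)],
)
result = answer_question(
    conn, "What was our gross margin last quarter?", "finance_director", "2025-Q4"
)
print(result.value, result.source)
```

The point of the design is that the number is computed by the database from stored records; the language component only decides which governed query to run.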

Microsoft's Work IQ, announced in March 2026, uses a similar approach to personalise Microsoft 365 Copilot. Work IQ functions as an intelligence layer that understands context, relationships, and work patterns, making Copilot faster, more accurate, and more secure than systems built on connectors alone (Microsoft Copilot Blog, 2026).

The principle remains consistent across implementations: AI assists with translation, but answers must come from governed sources.

The semantic layer is perhaps the most critical component of the infrastructure, though it operates invisibly to end users. This layer acts as a single source of truth for metric definitions, ensuring that everyone in the organisation receives the same answer to the same question.

Without a semantic layer, different departments might define "active customer" differently. Sales might count anyone who purchased in the last 90 days. Marketing might use 60 days. Finance might include only customers with current contracts. When each team uses their own definition, the same question produces different answers depending on who processes it.

The semantic layer eliminates this inconsistency by documenting one authorised definition for each business term. When the system encounters "active customer" in a query, it applies the definition the company has formally agreed upon. This ensures that a question asked in January produces a comparable answer to the same question asked in June, assuming the underlying data has not changed.
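As a rough sketch of the idea, the snippet below stores one definition of "active customer" and applies it wherever the term is used. The `DEFINITIONS` dictionary and `count_active_customers` function are hypothetical names, and choosing the 90-day window as the agreed definition is an assumption made purely for illustration.

```python
from datetime import date, timedelta

# Hypothetical agreed definition: active means a purchase in the last 90 days.
DEFINITIONS = {"active customer": {"window_days": 90}}


def count_active_customers(last_purchase_by_customer, as_of, term="active customer"):
    """Count customers whose last purchase falls inside the agreed window."""
    window = timedelta(days=DEFINITIONS[term]["window_days"])
    return sum(
        1
        for purchased_on in last_purchase_by_customer.values()
        if timedelta(0) <= as_of - purchased_on <= window
    )


# Made-up sample data.
purchases = {
    "A": date(2025, 6, 1),   # 29 days before the as-of date
    "B": date(2025, 4, 15),  # 76 days before
    "C": date(2025, 1, 10),  # 171 days before
}
active = count_active_customers(purchases, as_of=date(2025, 6, 30))
print(active)  # 2 under the 90-day definition
```

With a 60-day window the same data would yield one active customer instead of two, which is exactly the kind of disagreement a single shared definition prevents.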

Building this layer requires upfront work. Companies must document their metrics, agree on calculation methods, and maintain these definitions as business logic evolves. But once established, the semantic layer provides a foundation that supports not just the Business Analyst, but any system that needs to work with business metrics consistently.

According to the State of AI+BI Analytics Global 2025 Report, 38.3% of organisations now list governance frameworks and semantic layers as a top investment area (Owox, 2026). Without a governance foundation, even the most advanced AI cannot deliver reliable outcomes.

One of the practical requirements for any data access system is that it must integrate with whatever infrastructure already exists. Most mid-sized companies have accumulated a variety of tools over time: a data warehouse for centralised storage, business intelligence platforms for reporting, CRM systems for customer records, ERP systems for financial transactions.

The Business Analyst connects to these systems through read-only interfaces. This means it can retrieve data but cannot modify, move, or delete anything. The existing systems continue to operate exactly as they did before. Data pipelines, scheduled reports, and manual processes remain unchanged.

For companies using HubSpot, integration typically involves connecting HubSpot's Data Hub, which can unify data from spreadsheets, data warehouses, and other external sources (HubSpot, no date). A HubSpot integration automates data pipelines so warehouses, BI tools, and operational systems stay aligned without manual intervention.

The connection itself is established once during implementation. Technical teams configure the system to access the data warehouse, set up security credentials, and map business metrics to their corresponding database locations. After initial configuration, the system operates continuously, querying data as users submit questions.

Because the system reads from the same sources that feed existing dashboards and reports, the answers it provides align with what users would find in those tools. A finance director who asks the Business Analyst for quarterly revenue receives the same figure they would see in the monthly board report, because both draw from the same underlying data.

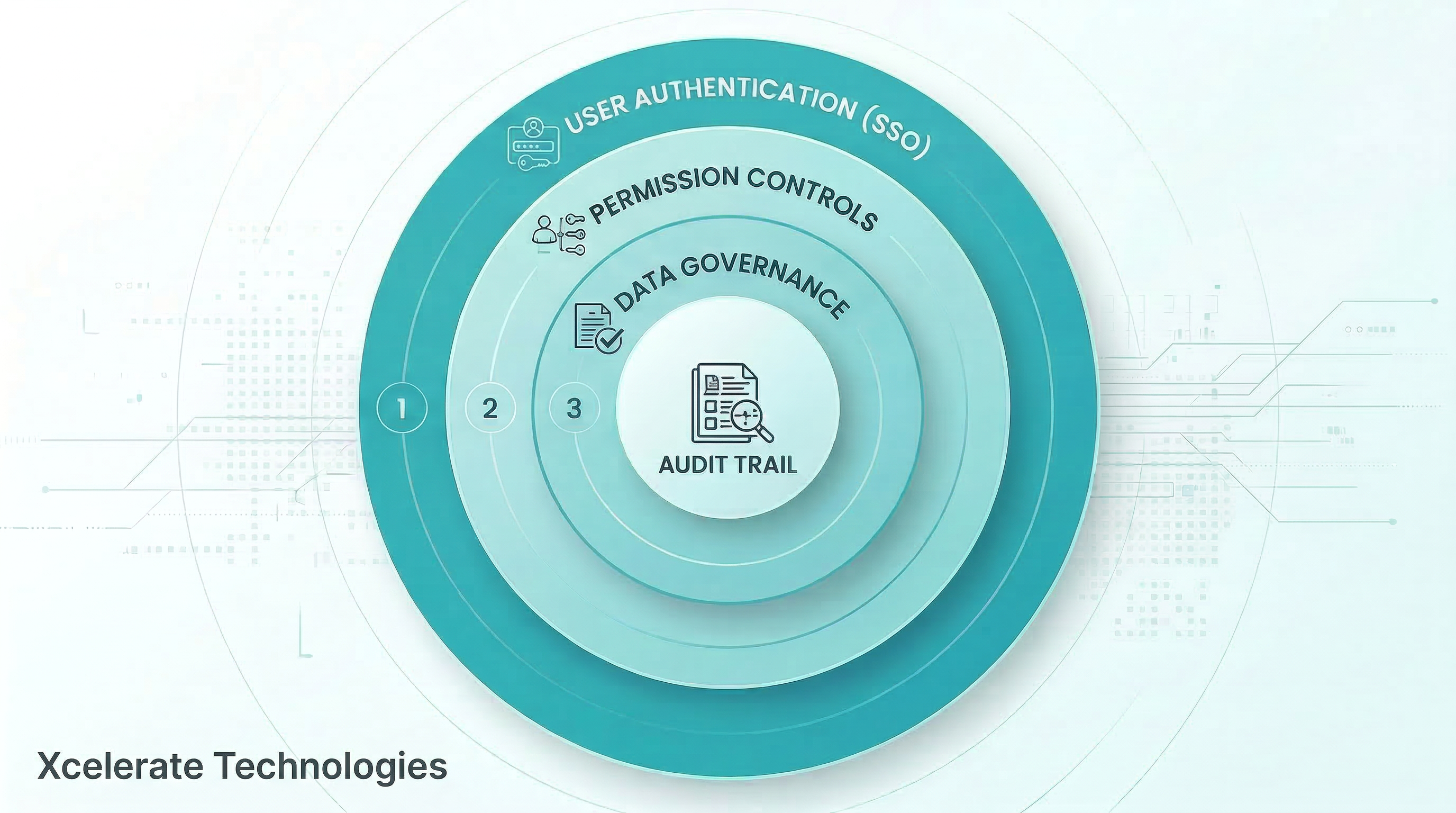

Security operates at multiple levels within the infrastructure.

Every interaction is logged. The audit trail captures the question asked, the SQL query generated, the data retrieved, the user who submitted the query, and the timestamp. This record supports both security monitoring and compliance requirements. If a question arises about who accessed specific information and when, the log provides a complete answer.
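One way to picture such a log entry is as a structured record with the fields listed above. The sketch below is illustrative only: the field names, the `audit_record` and `append_audit` helpers, and the use of an in-memory list in place of a log database are all assumptions.

```python
import json
from datetime import datetime, timezone


def audit_record(user, question, sql, rows):
    """Build one audit entry covering who asked what, and what was returned."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "question": question,
        "sql": sql,
        "rows": rows,
    }


def append_audit(log, record):
    # Serialise to one JSON line so entries are easy to store and search.
    log.append(json.dumps(record, sort_keys=True))


audit_log = []  # stand-in for a log database
append_audit(
    audit_log,
    audit_record(
        user="finance.director@example.com",
        question="What was our gross margin last quarter?",
        sql="SELECT margin FROM quarterly_summary WHERE quarter = ?",
        rows=[{"margin": 42.3}],
    ),
)
entry = json.loads(audit_log[0])
print(entry["user"], entry["timestamp"])
```

Whether the retrieved data itself is stored in the log, or only a reference to it, is a policy decision, since the log then becomes a sensitive data store in its own right.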

Microsoft's Work IQ demonstrates this approach by respecting customers' existing user permissions, security group assignments, sensitivity labels, and data loss prevention policies. The principle applies broadly: security layers already in place continue to function without modification.

Sensitive information such as personal identification numbers, credit card details, or other protected data can be masked or excluded from results based on governance policies. The system can be configured to return aggregated figures while hiding individual records, or to redact specific fields from query results entirely.
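Both options, redacting fields and returning only aggregates, can be sketched in a few lines. The field names, the `redact` and `aggregate_only` helpers, and the sample rows are hypothetical; a real deployment would take its rules from the organisation's governance policies.

```python
# Hypothetical policy: these fields must never appear in results.
SENSITIVE_FIELDS = frozenset({"national_id", "card_number"})


def redact(rows, sensitive=SENSITIVE_FIELDS, mask="***"):
    """Return copies of the rows with sensitive fields masked."""
    return [
        {key: (mask if key in sensitive else value) for key, value in row.items()}
        for row in rows
    ]


def aggregate_only(rows, field):
    """Return summary figures while hiding the individual records."""
    return {"count": len(rows), f"total_{field}": sum(row[field] for row in rows)}


# Made-up sample rows.
rows = [
    {"customer": "A", "card_number": "4111111111111111", "spend": 120.0},
    {"customer": "B", "card_number": "5500000000000004", "spend": 80.0},
]
masked = redact(rows)
summary = aggregate_only(rows, "spend")
print(masked[0]["card_number"], summary)
```

Applying the mask before results leave the backend, not in the user interface, keeps protected values out of logs and caches as well.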

The infrastructure described here ensures that answers come from official sources and respect security rules. It does not, however, fix problems in the underlying data.

If the data warehouse contains incorrect information, the Business Analyst will return incorrect answers. If transactions are missing from the financial system, calculated margins will be wrong. If customer records have not been updated, counts will be inaccurate.

This is sometimes expressed as "data quality in equals data quality out." The system amplifies access to data, which means it also amplifies any quality issues that already exist. Companies implementing this type of infrastructure often discover gaps in their data governance that were previously invisible because so few people accessed the data directly.

Recent surveys found that 57% of organisations admit data reliability is a top barrier to AI adoption, and while 65% of employees believe the data behind AI is solid, 75% of data leaders say those same employees need serious up-skilling in data literacy (Indeed, 2025).

Addressing data quality requires separate work: validating that source systems capture accurate information, establishing processes for regular data cleaning, documenting known limitations or gaps, and training users to interpret results correctly. The Business Analyst can surface these issues more quickly than traditional reporting methods, but it cannot resolve them automatically.
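As an example of the kind of validation this separate work might include, the sketch below flags records with missing required values and records that have not been updated recently. The `quality_report` function, the field names, and the one-year staleness threshold are assumptions chosen for illustration.

```python
from datetime import date


def quality_report(rows, required, updated_field, as_of, max_age_days):
    """List the indexes of rows with missing required values or stale updates."""
    missing = [
        index
        for index, row in enumerate(rows)
        if any(row.get(field) is None for field in required)
    ]
    stale = [
        index
        for index, row in enumerate(rows)
        if (as_of - row[updated_field]).days > max_age_days
    ]
    return {"missing_required": missing, "stale": stale}


# Made-up sample records.
records = [
    {"customer": "A", "amount": 100.0, "updated": date(2025, 6, 1)},
    {"customer": "B", "amount": None, "updated": date(2025, 6, 10)},
    {"customer": "C", "amount": 50.0, "updated": date(2024, 1, 5)},
]
report = quality_report(
    records,
    required=["customer", "amount"],
    updated_field="updated",
    as_of=date(2025, 6, 30),
    max_age_days=365,
)
print(report)
```

Checks like these only detect problems; deciding how to correct the flagged records remains a task for the teams that own the source systems.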

For organisations considering implementation, the technical requirements can be summarised as follows.

The system requires a language model capable of understanding natural language and generating structured queries. Current implementations use models from providers like OpenAI, Anthropic, or open-source alternatives, selected based on performance, cost, and data residency requirements.

It requires an MCP client implementation, which handles the translation between natural language and database queries while enforcing the rules about where data can come from.

It requires access to a centralised data warehouse where business information is stored. Common platforms include Snowflake, Google BigQuery, Amazon Redshift, or Microsoft Azure Synapse. The warehouse must be configured with appropriate security and permission controls.

It requires a semantic layer that documents business metrics and their calculation methods. Companies starting from scratch can begin with a simple metrics dictionary stored in structured files. More sophisticated implementations use dedicated semantic layer tools like dbt Metrics, Cube, or MetricFlow.

It requires backend infrastructure for orchestration, audit logging, and optional caching to improve response times for frequently asked questions. This typically involves a Python-based API layer, a database for logs, and potentially a caching system like Redis.

Finally, it requires an interface through which users interact with the system. This can be a web dashboard, integration with messaging platforms like Slack or Microsoft Teams, or both.
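The optional caching mentioned among the backend requirements can be illustrated with a small time-based cache. This is a sketch under stated assumptions: an in-memory dictionary stands in for a system like Redis, and the `TTLCache` class and its `get_or_compute` method are hypothetical names.

```python
import time


class TTLCache:
    """Keep computed answers for a limited time, then recompute them."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # key -> (stored_at, value)

    def get_or_compute(self, key, compute):
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # still fresh: skip the warehouse query
        value = compute()
        self._store[key] = (now, value)
        return value


calls = []


def run_query():
    # Stand-in for an expensive warehouse query (made-up figure).
    calls.append(1)
    return 42.3


cache = TTLCache(ttl_seconds=300)
# The key includes the role so a cached answer is never served
# to someone with different permissions.
key = ("finance_director", "gross margin", "last quarter")
first = cache.get_or_compute(key, run_query)
second = cache.get_or_compute(key, run_query)
print(first, second, len(calls))
```

The expiry time is a trade-off: a longer one gives faster responses, while a shorter one keeps answers closer to the latest warehouse refresh.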

Implementation timelines vary based on how much of this infrastructure already exists. Companies with centralised data warehouses and documented metrics can complete initial setup within a few weeks. Companies that need to consolidate data sources or formalise their metric definitions first should expect longer timelines, though this preparatory work delivers benefits beyond just enabling the Business Analyst.

The technical foundation described here exists to support a simple user experience: ask a question, receive an answer, trust the result.

The complexity remains hidden, for good reason. Users do not need to understand MCP, semantic layers, or database query languages. They do not need to know which tables contain which information or how permissions are enforced. They simply ask questions in the same way they would ask a colleague.

But beneath that simple interaction sits a carefully designed system that ensures answers are accurate, sourced from official data, restricted to authorised users, and traceable back to their origin. This combination of simplicity for users and rigour in the infrastructure is what makes instant, trustworthy data access viable at organisational scale.

This article has explained the technical foundation that makes instant answers possible. The next article in this series moves from theory to practice, presenting specific examples of how different teams use this capability and what decisions it enables.

The Xcelerate Business Analyst implements the infrastructure described here, connecting to existing data systems through MCP, applying consistent metric definitions, and returning answers that can be verified and traced. Understanding how the technology works helps clarify both its capabilities and its requirements.
